The history of artificial intelligence is a story of big dreams, bigger disappointments, and eventual breakthroughs that nobody saw coming. It’s a story of researchers promising thinking machines “within a generation” (starting in the 1950s), of funding booms and devastating busts, and of sudden explosions of capability that caught even experts off guard.

Understanding this history isn’t just trivia. It helps you put today’s AI excitement in context—and gives you a healthy skepticism for anyone claiming to know exactly where this is all going.

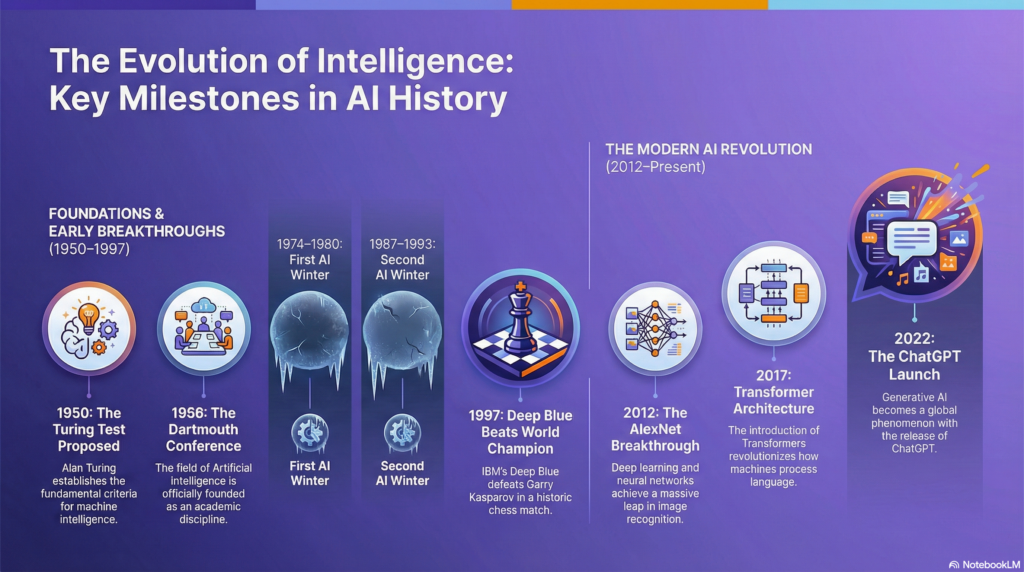

Timeline Overview

- The Dream Before the Machine (1940s-1950s)

- The Early Optimism (1956-1974)

- The First AI Winter (1974-1980)

- Expert Systems Era (1980-1987)

- The Second AI Winter (1987-1993)

- Machine Learning Rises (1993-2011)

- The Deep Learning Revolution (2012-2022)

- The ChatGPT Moment and Beyond (2022-Present)

- Key Themes and Lessons

Section 1: The Dream Before the Machine (1940s-1950s)

The dream of artificial intelligence is as old as human storytelling—think of the Golem in Jewish folklore or the automata of Greek mythology. But the practical possibility only emerged with the invention of electronic computers during World War II.

Alan Turing and the Question of Machine Intelligence

In 1950, British mathematician Alan Turing published a paper titled “Computing Machinery and Intelligence” that asked a simple question: Can machines think?

Rather than getting lost in philosophical debates about consciousness, Turing proposed a practical test: if a machine could carry on a conversation so convincingly that a human judge couldn’t tell whether they were talking to a human or a machine, we should consider the machine intelligent. This became known as the “Turing Test.”

Turing also made a prediction: he believed that by the year 2000, machines would be able to fool average interrogators about 30% of the time in five-minute conversations. It’s worth noting that modern chatbots can certainly do this—though whether that means they’re “thinking” remains debated.

The Dartmouth Conference: AI Gets Its Name

In the summer of 1956, a small group of researchers gathered at Dartmouth College for what would become the founding moment of AI as a field. Organized by John McCarthy, Marvin Minsky, Claude Shannon, and Nathaniel Rochester, the workshop had an ambitious proposal:

“We propose that a 2 month, 10 man study of artificial intelligence be carried out… The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

This is where the term “artificial intelligence” was coined. The researchers were optimistic—perhaps wildly so. McCarthy believed that significant progress could be made in a single summer. Instead, they’d be working on the problem for the rest of their careers.

Section 2: The Early Optimism (1956-1974)

The years following Dartmouth saw rapid progress and even more rapid predictions. Researchers created programs that could prove mathematical theorems, play checkers competitively, and even engage in simple conversations.

Early Achievements

Logic Theorist (1956): Created by Allen Newell and Herbert Simon, this program could prove mathematical theorems from Principia Mathematica. It’s sometimes called the first AI program.

General Problem Solver (1959): Newell and Simon’s attempt to create a universal problem-solving machine. It could solve puzzles like the Tower of Hanoi but struggled with real-world complexity.

ELIZA (1966): Created by Joseph Weizenbaum at MIT, ELIZA simulated a psychotherapist using simple pattern matching. Users found it surprisingly engaging—some even developed emotional connections to it. Weizenbaum was disturbed by this and became an AI skeptic.

SHRDLU (1970): Terry Winograd’s program could understand and respond to English commands about a simple virtual world of colored blocks. It demonstrated natural language understanding—within very narrow limits.

The Predictions

Buoyed by early successes, researchers made bold predictions:

Herbert Simon (1958): “Within ten years a digital computer will be the world’s chess champion.” (It took 39 years.)

Marvin Minsky (1967): “Within a generation… the problem of creating ‘artificial intelligence’ will substantially be solved.”

These predictions reflected genuine optimism, not mere hype. Early programs showed that computers could do things previously thought uniquely human. It seemed like human-level AI was just a matter of scaling up.

Why They Were Wrong (The First Time)

The problem was that early AI programs were brittle. They worked beautifully in narrow, well-defined domains and failed catastrophically in the messy real world. SHRDLU could discuss colored blocks eloquently but couldn’t handle a conversation about anything else.

Researchers discovered that the “easy” problems (recognizing faces, understanding language, navigating a room) were actually much harder than the “hard” problems (playing chess, proving theorems). This became known as Moravec’s Paradox: “It is comparatively easy to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility.”

Section 3: The First AI Winter (1974-1980)

By the early 1970s, the gap between AI promises and AI reality had become impossible to ignore. A series of critical reports—most notably the Lighthill Report in the UK (1973)—concluded that AI had failed to deliver on its ambitious goals.

The consequences were severe:

- Government funding for AI research was slashed dramatically

- Academic positions became scarce

- The term “AI” became toxic—researchers started avoiding it

- Many projects were abandoned

This period became known as the “AI Winter”—a term that would be used again.

The fundamental problem was that early AI relied on symbolic approaches—trying to encode human knowledge as explicit rules. But the real world has too many rules, too many exceptions, and too much context dependency to capture this way. A child learns language naturally; encoding all the rules of English proved essentially impossible.

Section 4: Expert Systems Era (1980-1987)

AI found new life in the 1980s through “expert systems”—programs designed to capture the knowledge of human experts in specific domains. Instead of trying to create general intelligence, researchers focused on narrow, practical applications.

The most famous example was MYCIN (developed in the 1970s but influential in the 1980s), which could diagnose bacterial infections and recommend antibiotics. Other expert systems helped configure computer systems, analyze geological data, and assist with various industrial processes.

The business world took notice. Companies invested heavily in expert systems, and a new industry emerged. Some systems delivered genuine value. The hype returned.

But expert systems had fundamental limitations:

- Knowledge acquisition was painfully slow—extracting expert knowledge took months or years

- Systems were brittle—they couldn’t handle situations outside their programmed rules

- Maintenance was difficult—rules needed constant updating

- Integration with existing systems was complex

The expert systems boom created expectations that couldn’t be sustained.

Section 5: The Second AI Winter (1987-1993)

The expert systems market collapsed in the late 1980s. The specialized hardware that ran these systems became obsolete as desktop computers grew more powerful. The promised returns on AI investments failed to materialize. Companies that had bet big on AI faced losses.

Once again, AI became a tainted term. Researchers rebranded their work: “machine learning” instead of “AI,” “cognitive systems” instead of “intelligent systems.” Funding dried up. The field retreated to academic laboratories.

But this time, something different was happening in the background. Researchers were quietly developing new approaches—statistical methods, neural networks, machine learning techniques—that would eventually revolutionize the field. The seeds of today’s AI were being planted during the winter.

Section 6: Machine Learning Rises (1993-2011)

The 1990s and 2000s saw a fundamental shift in AI: from rule-based systems to statistical, data-driven approaches. Instead of trying to program intelligence explicitly, researchers started letting machines learn patterns from data.

Key Milestones

Deep Blue Defeats Kasparov (1997): IBM’s chess computer beat the world champion, Garry Kasparov. This was a symbolic milestone—machines could now beat humans at the “intellectual” game. But Deep Blue used brute-force search and expert-programmed evaluation functions, not learning. It was impressive engineering, but not quite the breakthrough it seemed.

Statistical Machine Translation: Services like Google Translate (2006) showed that statistical approaches could handle language tasks that had stymied traditional AI. Instead of encoding grammar rules, these systems learned patterns from millions of translated documents.

Netflix Prize (2006-2009): Netflix offered $1 million for anyone who could improve their recommendation algorithm by 10%. The competition demonstrated the power of machine learning and attracted talent to the field.

IBM Watson Wins Jeopardy! (2011): Watson defeated human champions at Jeopardy!, demonstrating natural language understanding, information retrieval, and quick reasoning. It was a technical tour de force—though Watson’s subsequent commercial applications proved disappointing.

The Neural Network Renaissance

Neural networks—computing systems loosely inspired by biological brains—had existed since the 1940s but had largely been abandoned. They required too much computing power and too much data to work well. But some researchers, including Geoffrey Hinton, Yann LeCun, and Yoshua Bengio, kept the faith.

By the late 2000s, the conditions for a neural network breakthrough were falling into place: massive datasets were becoming available (thanks to the internet), computing power was exploding (especially GPUs designed for video games), and new training techniques made deep networks more practical.

Section 7: The Deep Learning Revolution (2012-2022)

2012 was the year everything changed. A neural network called AlexNet, created by Alex Krizhevsky and supervised by Geoffrey Hinton, won the ImageNet competition—a prestigious benchmark for image recognition—by a huge margin. It wasn’t a marginal improvement; it was a paradigm shift.

AlexNet showed that deep neural networks (networks with many layers) could achieve results that seemed impossible just years before. The technique worked because of three factors converging: big data (millions of labeled images), powerful GPUs (NVIDIA graphics cards), and improved algorithms (especially for training deep networks).

The Floodgates Open

After 2012, progress was startling:

Image Recognition: By 2015, neural networks surpassed human-level accuracy on ImageNet. Computers could identify objects in images better than people.

Speech Recognition: Virtual assistants like Siri, Alexa, and Google Assistant became practical. Error rates dropped dramatically.

AlphaGo (2016): DeepMind’s program defeated the world champion at Go—a game with more possible positions than atoms in the observable universe. Unlike chess, Go couldn’t be solved through brute force. AlphaGo had to develop something resembling intuition.

AlphaFold (2020): DeepMind’s protein-folding AI solved a 50-year-old problem in biology, predicting protein structures with unprecedented accuracy. This has practical implications for drug discovery and biological research.

The Transformer Revolution

In 2017, researchers at Google published a paper titled “Attention Is All You Need.” It introduced the transformer architecture—a new way of processing sequential data that would become the foundation for modern language AI.

Transformers use “attention mechanisms” that let the model consider all parts of the input simultaneously, rather than processing it sequentially. This made them much better at understanding context and relationships in language.

OpenAI took transformers and scaled them up. GPT-1 (2018) had 117 million parameters. GPT-2 (2019) had 1.5 billion—OpenAI initially declined to release it fully, citing concerns about misuse. GPT-3 (2020) had 175 billion parameters and showed capabilities that surprised even its creators: it could write essays, code, poetry, and carry on conversations without being specifically trained for any of these tasks.

Image Generation Emerges

While language models grabbed headlines, image generation was advancing rapidly:

GANs (2014): Generative Adversarial Networks pitted two neural networks against each other—one generating images, one trying to detect fakes. This competition produced increasingly realistic synthetic images.

DALL-E (2021): OpenAI showed that transformers could generate images from text descriptions. Type “an astronaut riding a horse in the style of Picasso” and get exactly that.

Stable Diffusion (2022): An open-source image generation model that could run on consumer hardware. Suddenly, anyone could generate AI images.

Section 8: The ChatGPT Moment and Beyond (2022-Present)

On November 30, 2022, OpenAI released ChatGPT. Within five days, it had over a million users. Within two months, it was the fastest-growing consumer application in history.

ChatGPT wasn’t a fundamental technical breakthrough—it was GPT-3.5 with a conversational interface and fine-tuning to be helpful and safe. But its accessibility changed everything. Suddenly, anyone with an internet connection could have a conversation with a powerful AI. Teachers, programmers, writers, students, and curious people everywhere discovered what large language models could do.

The Competition Explodes

ChatGPT’s success triggered an AI arms race:

Google: Rushed to release Bard (later renamed Gemini), though its initial launch was marred by factual errors. Google has since invested heavily in AI, integrating it across products.

Anthropic: Founded by former OpenAI researchers concerned about AI safety, Anthropic released Claude. The company focuses on building AI that’s helpful, harmless, and honest, with strong emphasis on safety research.

Meta: Released Llama as an open-source model, enabling researchers and companies to build on it. Llama has spawned an entire ecosystem of fine-tuned variants and applications.

Mistral: A French startup creating smaller, efficient models that compete with much larger ones. Represents the European push for AI independence.

Microsoft: Invested billions in OpenAI and integrated GPT-4 into Bing, Office, and Windows. Repositioned itself as an AI-first company.

GPT-4 and Beyond

In March 2023, OpenAI released GPT-4—a significant leap in capabilities. It could pass bar exams, score well on medical licensing tests, and handle complex reasoning tasks that stumped earlier models. Critically, GPT-4 was multimodal—it could understand images as well as text.

Since then, we’ve seen:

- Rapid improvement in model capabilities across providers

- Claude expanding to very large context windows (200,000+ tokens)

- Open-source models approaching closed-source performance

- AI image and video generation becoming remarkably sophisticated

- AI coding assistants becoming standard developer tools

- Integration of AI into search engines, productivity software, and consumer apps

The Open Source vs. Closed Source Debate

A major tension has emerged between open and closed approaches:

Closed source (OpenAI, Anthropic, Google): Keep model weights private, accessible only via API. Arguments for: safety (can’t be modified for misuse), competitive advantage, control over deployment.

Open source (Meta’s Llama, Mistral, Stability AI): Release model weights publicly. Arguments for: democratization, research progress, independence from big tech, ability to run locally.

This debate remains unresolved and will likely shape AI’s future development.

Section 9: Key Themes and Lessons from AI History

Looking back at 80 years of AI history, some patterns emerge:

1. Predictions Are Usually Wrong

From “thinking machines in a generation” (1960s) to “AI winter forever” (1980s) to “GPT-3 is the ceiling” (2021), experts have consistently failed to predict AI’s trajectory. Humility about the future seems warranted.

2. Progress Comes in Bursts

AI development isn’t linear. Long periods of incremental progress are punctuated by sudden breakthroughs (AlexNet, transformers, ChatGPT) that shift the entire landscape. The next breakthrough could come tomorrow or in a decade.

3. Compute and Data Matter More Than Clever Algorithms

Many of today’s techniques existed for decades before they worked. What changed was having enough data and computing power to make them practical. The “unreasonable effectiveness of data” has been a defining theme.

4. Narrow AI Succeeds; General AI Remains Elusive

Every successful AI application has been narrow—designed for specific tasks. Despite decades of trying, general-purpose AI that can handle any intellectual task remains a goal, not a reality. Current LLMs are impressive generalists, but they’re still fundamentally prediction machines with significant limitations.

5. The Hardest Problems Are Often Unexpected

Nobody predicted that image recognition would be solved before commonsense reasoning, or that generating photorealistic images would be easier than reliable arithmetic. AI has consistently surprised us about what’s easy and what’s hard.

Where We Are Now

As of late 2024, we’re in an unprecedented period of AI capability and uncertainty:

What’s Clear:

- Large language models are genuinely useful for many tasks

- AI can now generate convincing text, images, audio, and video

- Integration of AI into everyday tools is accelerating

- The economic and social impacts are just beginning

What’s Unclear:

- Whether current approaches will lead to further breakthroughs or hit walls

- How to address AI safety, alignment, and misuse concerns

- What the long-term economic impacts will be

- Whether we’re heading toward more capable AI or another winter

What to Watch:

- Progress (or lack thereof) in AI reasoning and reliability

- The open source vs. closed source competition

- Regulatory responses around the world

- Energy and compute constraints

- Real-world application results beyond demos

Continue Your Journey

For quick definitions: Check the AI Glossary for any terms that are still fuzzy.

For practical understanding: Read AI Concepts Explained to understand how today’s AI actually works.

For staying current: Subscribe to the Weekly AI Digest for updates as this rapidly evolving story continues.

Last updated: [Date]

Sources and further reading available upon request. This page will be updated as AI history continues to unfold.